Edition 8: What lies ahead

The flatness of social media, inequality visualized, technology's next Great Leap Forward

Another week, another crazy twist in the thrill ride that is 2020. You’re likely inundated with the news of Trump’s COVID diagnosis (and conflicting reports of his condition), and the whole fiasco really drives home the frantic reality of minute-to-minute reporting and commentary that the internet has ushered in.

This week, the weather has once again cooled and cleared in San Francisco, returning a sense of normalcy after the heat waves and smoky air. Yesterday I ventured to Costco for the first time since shelter in place took hold, and it was packed - if it weren’t for the masks you might not notice a pandemic at all. People really are anxious to get back to business as usual.

Ever since I read Wait But Why’s deep dive on Neuralink (linked below), I’ve been consumed with thoughts of the far future. My reading has been colored by those big themes this week. Read on!

Orthographic media

Imagine those colorful cubes in the orthographic projection above as tweets: all the same size, taking up the same amount of space on the canvas, even though some are way off in the distance while others brush the virtual camera’s lens. Maybe this is a flavor of context collapse: the standardization of all events, no matter how big or small, delightful or traumatic, to fit the same mashed-together timeline.

This post applies a thought-provoking analogy to social media in the internet age. While profit incentives are certainly to blame for the shortening of our attention spans and radicalization of our viewpoints, another problem is the persistent lack of context in online spaces.

While Twitter, Facebook, Reddit, et al. provide effectively curated user experiences, temporal and spatial context cues have fallen by the wayside along with the physical newspaper.

Before electronic media, news was attenuated by the friction and delay of transmission and reproduction. When it arrived on your doorstep, a report of a far-off event had an “amplitude” that helped you judge whether or not it mattered to you and/or the world.

When all news, every breaking update, every trend is laid out on the same playing field, it’s hard to tell what matters to you personally. And although your feed is customized to your interests, the self-reinforcing nature of the algorithms tends toward serving you what you want to see rather than what is actually relevant to you, i.e., what you need to see.

The internet has been an incredible democratizing force, and a major driver of good and equality - there’s no question about it. But the orthographic nature of exposure has come at the cost of certain localized perspectives, whose loss we are only beginning to grapple with.

Life certainly felt simpler before smartphones and social media were widespread. Now that Pandora’s box has burst wide open, regulation will inevitably need to catch up to technology. As Google and Facebook in particular fall under increasing scrutiny, I expect this to be a major theme in the tech world this coming decade.

Extensive Data Shows Punishing Reach of Racism for Black Boys

The study, based on anonymous earnings and demographic data for virtually all Americans now in their late 30s, debunks a number of other widely held hypotheses about income inequality. Gaps persisted even when black and white boys grew up in families with the same income, similar family structures, similar education levels and even similar levels of accumulated wealth.

As the Black Lives Matter movement continues to stand at the forefront of the national consciousness, it’s important to note that the systemic issues it highlights have persisted out of the spotlight for decades.

There’s a lot of data in this article, masterfully visualized to tell the same uncomfortable story in many ways: race is inextricably tied to one’s ability to succeed in America. Black Americans are disproportionately likely to be incarcerated, unmarried, and impoverished.

Another interesting takeaway:

Asian-Americans earn more in adulthood than whites who were raised in families with similar incomes. But that advantage largely disappears when the researchers look only at children whose parents were born in the United States. Non-immigrant Asian-Americans fare about as well in the economy as whites.

Despite all the ways the system has failed Black Americans, Asian-Americans seem to have found equal footing with whites, at least in terms of income mobility. In fact, children of first-generation Asian immigrants fared best of any group, lending credence to the idea of America as a land of opportunity. It remains to be seen whether this historical trend continues.

Magic Leap Tried to Create an Alternate Reality. Its Founder Was Already in One

After Magic Leap’s $2,300 headset bombed, the startup narrowed its focus to professional applications, tried unsuccessfully to sell the company and fired more than half of its staff. Investors wrote down their stakes by an average of about 94% over a 12-month period ending in June, a steeper decline than WeWork, according to data collected by Zanbato, a research firm that tracks institutional investors.

I remember learning about Magic Leap years ago as a wide-eyed high school student. The comparisons to early Apple abounded, but it was more like General Magic - a great technological leap forward, a venture far ahead of its time. It was really unclear what the company was actually making (something involving augmented reality), but the hype was coming from such important investors that it seemed like something truly revolutionary was coming.

Like Theranos, it ended up being mostly smoke and mirrors. But the dream that it captured - the next leap forward for personal technology - is alive and well.

We already have phones in our pockets, smart watches on our wrists, voice assistants. Smart TVs, smart thermostats, smart refrigerators. What does a leap forward from here look like? To quickly recap, the availability of computing for everyday individuals has evolved from:

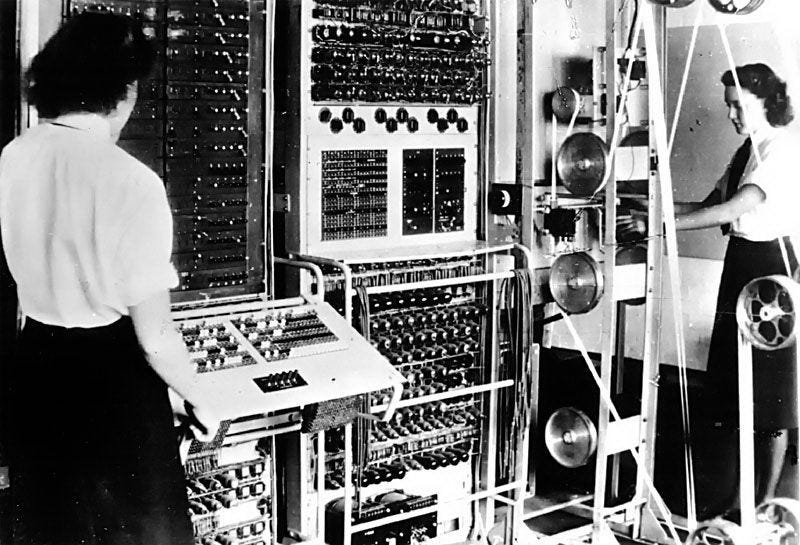

Room sized units available only to a select few. Prohibitively expensive, impossible to move. Interface: punch cards, eventually keyboards.

Early desktops that were designed for a single user at a time. Still too esoteric and expensive for any normal person to reasonably want and own.

Early desktops that were the beginning of the personal computer era - including early versions of the now commonplace mouse and keyboard interface.

Personal computers finally began to go mainstream in the 1980s, offering everyday people the ability to interface with the digital world for the very first time. Even so, access was often limited to one machine per family, if available at all.

Laptops were a major stride in terms of portability, but still limited the user to a trackpad/keyboard interface. That said, this was the first time people could experience computing on the go - in effect, carrying the digital world with them.

2007 saw Steve Jobs introduce the iPhone, ushering in the smartphone era of computing we live in today - our phones are always with us, making us always-on and always-connected to the great expanse of the software world. The internet goes with us wherever we go.

Through developments in virtual and augmented reality (Google Glass, Oculus, Magic Leap, etc.), we have even blurred the line between real and digital, but we are effectively stopped here - technology is something we carry with us, keep in our pockets, strap onto our wrists and heads. It surrounds us, in the form of Amazon’s Alexa and Apple’s Siri. But this is the limit of personal computing, the line between real and digital, human and high tech. Tech is deeply personal, but ultimately something in our environment.

Or is it? What is the next great leap in personal computing?

Neuralink and the Brain’s Magical Future

Neuralink’s brain-computer interface is one contender for where we go from here. The personal computer becomes indistinguishable from the person - the inevitable endgame for our ever-shrinking technology and ever-available digital selves. The human and the machine, intertwined. It could lead to the most revolutionary era since the advent of humankind.

While a world in which we all have cybernetic implants and direct telepathic connections to a shared global consciousness is undoubtedly years (decades?) away, this eventuality may be closer (and less frightening) than we think. This incredibly thorough and well-researched Wait But Why article has been one of my absolute favorite reads of the year, maybe one of the most inspiring things I’ve read in my life.

You may be skeptical about a chip in your brain, at least as skeptical as the first patient who received a pacemaker, or the first person to receive a cochlear implant. The applications for such a brain interface will be medical at first, after all - for example, restoring mobility to the paralyzed. But its true potential lies in the network effects of learning, not unlike how artificial intelligence has exploded in potency in recent years as it builds on what it has already learned. Imagine if, rather than being bound by the necessity of reading, you could simply download a university education.

In fact, it’s clear that artificial intelligence is soon going to surpass our collective human intelligence, like it or not.

This is what keeps Elon up at night. He sees it as only a matter of time before superintelligent AI rises up on this planet—and when that happens, he believes that it’s critical that we don’t end up as part of “everyone else.”

That’s why, in a future world made up of AI and everyone else, he thinks we have only one good option:

To be AI.